This month’s Brainstorm asks what design changes must be made in order for wearables to become adopted by the general population (Brainstorm, page 24). The consensus?

Smaller, longer-lasting batteries. While I don’t contest these answers, the reason that I don’t wear my Fitbit has nothing to do with its size or charging requirements. It was comfortable on my wrist and water-resistant, and since I charge my phone every night, I had no problem plugging in one more device.

My issue was that the wearable didn’t provide me with any real benefit. I quickly jumped on the bandwagon when my dad, my sister, and I decided to compete to see who could get the most steps on any given day (we are a wildly competitive family), but the novelty soon wore off, and I removed my Fitbit for good.

What my Fitbit did tell me was that I really don’t walk that much some days (such is life in a cubicle) and I really don’t sleep that soundly at night. But I knew these things already.

The wearable just made them difficult to deny. Apparently walking to the coffee machine and back doesn’t get me up and around as much as I had hoped (even though it happens regularly, all day, because of the aforementioned sleep issues and general caffeine addiction).

My other qualm: It didn’t give me any credit for going to yoga, but my dad racked up steps simply by rocking in his La-Z-Boy. Needless to say, my dad still wears his, although he did take a short hiatus from the tech in a period of frustration, as he has less patience than I do with the device’s charging time.

According to a recent report by Pew Research Center, 83 percent of experts say wearable technology will have a “widespread and beneficial effect” on the public by 2025. While I don’t doubt the consensus, I don’t think this “beneficial effect” will be the ability to count how many steps we take in a given day.

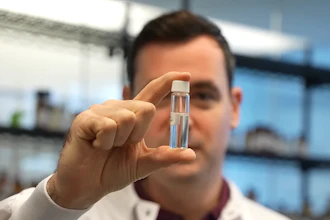

Recently, I had to wear a Holter monitor, a medical device that continuously recorded my heart's rhythms for 24 hours (don’t worry, I am quite positive any issues stem from my aforementioned coffee consumption).

However, I was able to avoid a long stay in a hospital bed because of this technology. Although the technology is not new (Norman J. Holter invented telemetric cardiac monitoring in 1949), it is an example of where wearables have an opportunity to really improve quality of life.

The device let me go about my day as normal, but it was very uncomfortable and bulky. Simple tape was still used to secure the electrodes to my skin; apparently some problems still have no high-tech solution.

My issues with consumer wearables persist, and I remain skeptical of the many expert reports that predict our future. Yet any time I find myself feeling doubtful about impending technology, I remember that my grandfather grew up with nothing during the Great Depression, and now he can FaceTime with his grandchildren on his new iPhone. That is pretty amazing.

What do you think? Will wearables change our lives for the better? Email me at [email protected].

This blog originally appeared in the May 2015 print edition of PD&D.