A woman in Arizona was tragically hit and killed by a self-driving car on Sunday. The vehicle, part of Uber’s autonomous driving test fleet, was in self-driving mode at the time of the crash and had an emergency backup driver behind the wheel. It was the first fatal accident involving a self-driving car and a pedestrian.

In response to the incident, Uber suspended all further testing of autonomous vehicles and promised its full cooperation with the investigation of the accident. Regardless of the outcome of this investigation, the crash is certain to reignite public debate about self-driving technology, its risks, and the legal and ethical challenges involved.

Unsurprisingly, safety and the ability of self-driving cars to avoid mistakes are among the biggest concerns of people opposed to autonomous vehicles, as a report published by the Boston Consulting Group in 2016 showed. Convincing people that self-driving technology is safe and reliable will be one of the biggest challenges for all companies involved going forward. And while proponents argue that artificial intelligence will ultimately make driving safer (last year more than 37,000 people in the United States died in traffic-related accidents), any accident involving an autonomous vehicle must be considered a major setback on the road to a (potentially) driverless future.

Consumer Concerns About Self-Driving Cars

This chart shows some of the main concerns that consumers have with respect to self-driving cars.

Mar 21, 2018

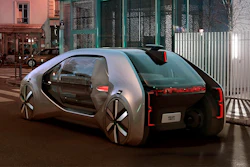