Over the last few years, augmented reality (AR) technology and its applications have progressed in leaps and bounds. A couple of years ago, AR applications broadly followed these patterns:

- A pop-up virtual object on a 2D marker: mostly used in marketing, product branding and DIY (do-it-yourself) apps

- What’s inside the box: AR-based step-by-step instructions for unwrapping a package and seeing the virtual components inside

- A virtual fitting room: for virtually checking the fit of body accessories like watches, jewelry and apparel

- Virtual visualizations: mostly used for architecture planning and layouts

- Information overlays: spanning widespread applications such as citizen information at geo-tagged points of interest, and covering all kinds of content mash-ups (text, images, videos and audio) on geo-tags.

The first wave was mostly exploratory in nature and, looking back, quite simple compared to current application trends. Early adoption was oriented towards wowing customers in product marketing, familiarizing consumers with product features, or training users in field services. Although adoption started in marketing, it quickly graduated to other process areas such as product design, product installation and usage. Adoption also extended to industry segments such as education, utilities, travel, manufacturing and healthcare.

Technologically, AR evolved from simple 2D-marker-based platforms to geo-tagged and then natural-marker-based platforms. From the device perspective, it has evolved from mobile handhelds to eyewear such as Google Glass.

Gazing into the crystal ball, I see AR leap-frogging to the next level of sophistication. Interestingly, the common factor is AR being combined with other cutting-edge technologies to produce new applications in manufacturing. Here is a look at a select few of these mashed-up applications. [Note: In the viewpoint below, I use AR and virtual reality interchangeably.]

AR and collaboration

In the first wave of field-service applications, we saw a solo worker augmented with information rendered on his or her mobile screen. Now AR has been integrated with unified collaboration technologies and has widened into a collaboration network that includes the back office and co-workers. The data is streamed in real time, richer in content and more interactive. This has improved productivity for both the remote worker at his or her work location and the back-office support teams.

Such adoption is already happening across various industries. For instance, we see early adoption in virtual collaboration for automotive design and in remote healthcare delivery.

Context-aware AR

Extending the above illustration, context-aware AR is another development we observe, driven by advances in semantic data and location-awareness technologies. For example, in an airport maintenance scenario, the primary live video stream from the solo worker at the work spot is used to give a contextualized view to each role supporting him or her. The plumber gets the plumbing-line view, the electrician gets the electrical-line view, the civil engineer gets the structural engineering view, and so on. Additionally, each view is personalized, customized and annotated for each collaborator’s context. These innovative new AR-based UX frameworks will offer higher affordance and a closer mapping to users’ mental models.

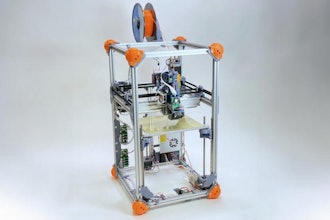

AR and 3D printing

Another interesting development is the intersection of the virtual world of AR and the real world of 3D printing. Until recently, 3D printing had used CAD/CAM-generated designs as its main input, which made design a more or less static, predefined step. Now, with this combination of technologies, the design is generated and provided on the fly and altered in near real time. It’s like watching a sculptor work in a virtual space to produce a real-life sculpture. (A good recent example from Meta can be found at http://www.youtube.com/watch?feature=player_embedded&v=aypM1qaWPck.)

AR and immersive visualizations

The intersection of AR with big data, data visualization and simulation technologies can transform plain numbers into compelling stories. Simple data and statistics can be enhanced when an augmented reality layer is placed over real-time streaming data. The data-analytics world has mostly been one of data visualizations and touch interactivity, and until now it has been largely a 2D world. With AR, one enters a more immersive world with interactive data blobs all around one’s view.

To name a few application examples:

- On a factory floor, an AR data visualization interface could automatically detect target equipment and display the data relevant to that particular piece of equipment.

- A store manager could get information about retail operations in the store itself, with the relevant transaction details, statistics and comparisons with neighboring stores augmented onto his or her view.

- Inspecting a factory floor or outlet could be as simple as seeing the functional state of machines, the number of jobs completed or sales figures augmented onto a visor, or even presented as a voice-over, while one moves through the area of interest.

Technologies such as smart eyewear, number-crunching servers and data modeling need to advance much further before we see practical, affordable adoption of these applications in day-to-day scenarios.

AR and neuro-gaming

Looking a bit further into the future, interesting innovations are happening at the intersection of neuro-gaming and AR. Gaming, which has mostly been about natural-sense simulation (motion, gesture, eye tracking and facial recognition), can now be integrated directly with neuro-simulations (augmented reality, virtual reality, haptics) for interesting new sense mash-ups. Adoption and safety certification will take time. Adoption is starting predominantly with gaming but is spreading into education, sports, design rooms, factory floors and healthcare. This segment is worth watching as it gains wider adoption and AR becomes a de facto part of any future user experience.

Paul currently holds the chief technologist role for the Manufacturing & Hitech SBU of Wipro, where his focus is applying emerging technologies to manufacturing process areas. He also anchors co-innovation programs with customers and academia. Over his 20+ year ICT career, he has worked across the Client-Server, Web and Digital technology waves. He has played various techno-functional roles and has delivered many first-of-a-kind projects for Wipro’s clients across industry sectors.